5-Step Guide to AI Fraud Detection for Banks in 2026


The IMF's April 2026 technical note carries a specific warning: banks hoarding transaction data inside siloed AML systems are losing the fraud fight, and losing it expensively. Legacy AML alert systems generate false positives on 90% to 95% of flagged transactions, according to industry data cited by TheStreet, and a consortium model built on federated learning cuts that rate by 40% to 60%, according to Backbase and Emburse research.

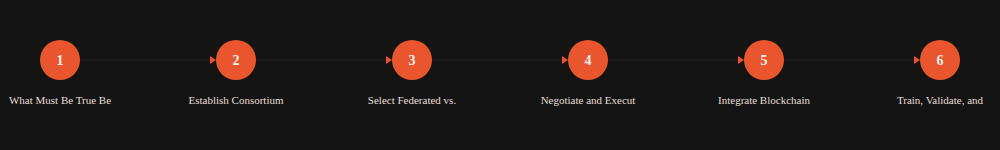

This guide is for the COO or chief compliance officer whose institution has already decided to join or form an inter-bank fraud consortium. It covers five sequential steps, the failure scenarios that derail most deployments, and explicit go/no-go checkpoints before full production rollout.

Step 0: What Must Be True Before You Start

Four preconditions must hold before your institution commits engineering resources or signs a consortium agreement.

Regulatory pre-approval is in place. Your consortium AI system almost certainly qualifies as high-risk under the EU AI Act. The August 2, 2026 deadline requires conformity assessments to be completed, technical documentation finalized, and registration in the EU high-risk AI database confirmed, according to GDPR Register. In the US, FinCEN must be notified of any shared data arrangement that touches suspicious activity report (SAR) workflows. Skipping this step does not delay compliance; it creates retroactive violations.

Your data is anonymizable to an auditable standard. Consortium members must agree on a single anonymization protocol before any data flows. Pseudonymization under GDPR Article 4 is the floor, not the ceiling. Transaction identifiers, account numbers, and counterparty names must be replaced with tokenized equivalents that no single consortium participant can reverse-engineer. If your core banking system does not support real-time tokenization at ingress, that infrastructure gap must close before Step 1 begins.

Internal model governance is in place. The consortium aggregates learning across institutions, so each institution's local model must already meet internal validation standards. The SR 26-2 guidance on GenAI model risk management applies here. If your local model has not passed a model risk management review, contributing its gradients to a federated consortium introduces unquantified risk. See our breakdown of SR 26-2 GenAI model risk management requirements before proceeding.

Legal counsel has reviewed the data governance contract template. The consortium agreement defines data ownership, liability allocation when a model error causes a false arrest or regulatory action, exit rights, and IP ownership of the jointly trained model. Institutions that sign consortium agreements without bespoke legal review inherit other members' compliance failures.
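The anonymization precondition above can be illustrated with a minimal sketch of keyed tokenization using HMAC-SHA256. The field names, the example key, and the `tokenize_record` helper are hypothetical, and the sketch does not address key custody, which is what determines whether any single participant could reverse the tokens.

```python
import hashlib
import hmac

def tokenize(value: str, secret_key: bytes, field: str) -> str:
    """Return a deterministic, keyed token for one sensitive field.

    The field name is mixed in so the same raw string used as an account
    number and as a counterparty name yields unrelated tokens.
    """
    message = f"{field}:{value}".encode("utf-8")
    return hmac.new(secret_key, message, hashlib.sha256).hexdigest()

def tokenize_record(record: dict, secret_key: bytes, sensitive_fields: tuple) -> dict:
    """Replace sensitive fields with tokens; leave other fields untouched."""
    return {
        name: tokenize(str(val), secret_key, name) if name in sensitive_fields else val
        for name, val in record.items()
    }

# Hypothetical example values; a real key would come from an HSM or KMS.
EXAMPLE_KEY = b"demo-key-not-for-production"
SENSITIVE = ("transaction_id", "account_number", "counterparty_name")

raw = {
    "transaction_id": "TX-0001",
    "account_number": "12345678",
    "counterparty_name": "Acme Ltd",
    "amount": 250.0,
}
tokenized = tokenize_record(raw, EXAMPLE_KEY, SENSITIVE)
```

Because the token is deterministic under a given key, the same account still links across transactions for pattern detection, while a party without the key cannot recompute it from the raw value.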

Step 1: Establish Consortium Governance Structure

What to do: Appoint a consortium steering committee with one voting representative per member institution. Define decision thresholds: which decisions require unanimity (new member admission, model architecture changes) versus simple majority (KPI targets, reporting cadence). Hire or designate a neutral consortium administrator, either a third-party fintech such as Rhino or a rotational chair among members.

Why it matters: Without explicit voting rules, a single member's objection can block a critical model update during an active fraud campaign. The Rhino consortium model, highlighted at the AML and FinCrime Tech Forum USA in April 2026, works because compliance officers can trace recommendations back through their own local model's logic. That traceability depends on governance rules being documented before the first model gradient is shared.

Watch for: Founding members claiming permanent veto rights. This structure collapses when smaller banks need to exit and the exit mechanics are undefined.

Time estimate: 6 to 10 weeks. Who does it: General counsel, chief compliance officer, COO.
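The decision thresholds in this step can be written down as an explicit rule table. The sketch below is illustrative only: the decision types come from the text above, but the `DECISION_RULES` mapping, the function name, and the choice to count a strict majority of votes cast are assumptions that a real consortium agreement would define.

```python
from enum import Enum

class Threshold(Enum):
    UNANIMITY = "unanimity"
    SIMPLE_MAJORITY = "simple_majority"

# Decision types taken from the step above; the mapping itself is illustrative.
DECISION_RULES = {
    "new_member_admission": Threshold.UNANIMITY,
    "model_architecture_change": Threshold.UNANIMITY,
    "kpi_targets": Threshold.SIMPLE_MAJORITY,
    "reporting_cadence": Threshold.SIMPLE_MAJORITY,
}

def decision_passes(decision_type: str, votes: dict) -> bool:
    """votes maps member name -> True (in favour) or False (against)."""
    if not votes:
        raise ValueError("at least one vote is required")
    rule = DECISION_RULES[decision_type]
    in_favour = sum(1 for v in votes.values() if v)
    if rule is Threshold.UNANIMITY:
        return in_favour == len(votes)
    return in_favour * 2 > len(votes)  # strict majority of votes cast
```

With three members and one objection, `decision_passes("kpi_targets", ...)` returns `True` while `decision_passes("new_member_admission", ...)` returns `False`, which is the asymmetry the steering committee needs to agree on in advance.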

Step 2: Does Your AI Data Governance Framework Determine Which Architecture You Can Legally Use?

Yes. For any consortium with EU members, the applicable data governance framework directly determines architecture choice: federated learning is the only option consistent with GDPR, because centralizing transaction records creates a data controller relationship that requires an explicit legal basis for each transfer. Federated architectures keep raw data inside each institution and transmit only model gradients across the network, which removes the record-transfer exposure.

What to do: Evaluate two architectures against your regulatory constraints. Centralized pooling sends anonymized transaction records to a shared data lake, where a single model trains on the combined dataset. Federated learning keeps raw data inside each institution; only model gradients (mathematical updates, not records) travel across the network.

Why it matters: A cross-institutional study published in MDPI (2026) found that federated graph attention network models across five virtual institutions achieved performance gains exceeding 15% while eliminating negative transfer effects from simple averaging approaches. The regulatory and performance case for federated learning is now aligned.

Watch for: Vendors pitching "federated" architectures that actually transmit more than gradients. Audit the data flow diagram before signing any contract. If the vendor cannot produce a data flow diagram, that is your answer.

Time estimate: 4 to 6 weeks for architecture decision and vendor selection. Who does it: CTO, chief data officer, external privacy counsel.
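To make the "gradients, not records" distinction concrete, here is a minimal sketch of one federated aggregation round using plain weighted averaging. This is the simple averaging baseline, not the graph attention approach from the MDPI study, and the function names and the use of local sample counts as weights are assumptions for illustration.

```python
def federated_average(updates: list, sample_counts: list) -> list:
    """Weighted average of per-member gradient vectors.

    updates[i] is member i's gradient (a list of floats); sample_counts[i]
    is how many local examples produced it. Only these vectors, never the
    underlying transactions, are sent to the aggregator.
    """
    if not updates or len(updates) != len(sample_counts):
        raise ValueError("need one sample count per update")
    dim = len(updates[0])
    if any(len(u) != dim for u in updates):
        raise ValueError("all updates must have the same length")
    total = sum(sample_counts)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    return [
        sum(u[j] * n for u, n in zip(updates, sample_counts)) / total
        for j in range(dim)
    ]

def apply_update(weights: list, gradient: list, learning_rate: float) -> list:
    """One gradient-descent step on the shared model weights."""
    return [w - learning_rate * g for w, g in zip(weights, gradient)]
```

When auditing a vendor's data flow diagram, the payload crossing the network should look like the `updates` argument here: fixed-length numeric vectors plus a count, with no transaction-level fields.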

AML False Positive Rates: Legacy vs. Consortium AI

The 35% false positive rate achievable with consortium federated ML represents a relative reduction of roughly 62% from the 90% to 95% legacy rule-based baseline. HSBC's Dynamic Risk Assessment deployment achieved a 60% false positive reduction, according to Emburse, using a comparable layered architecture within a single institution rather than a consortium.
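The reduction figure can be checked against the legacy range quoted at the top of this guide. The short calculation below assumes the reduction is relative (measured against the baseline rate, not in percentage points); the function name is illustrative.

```python
def relative_reduction(baseline_rate: float, new_rate: float) -> float:
    """Fractional drop from baseline_rate to new_rate (both as fractions)."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (baseline_rate - new_rate) / baseline_rate

# 35% consortium rate against the 90% to 95% legacy range quoted earlier.
low = relative_reduction(0.90, 0.35)   # about 0.611
mid = relative_reduction(0.925, 0.35)  # about 0.622
high = relative_reduction(0.95, 0.35)  # about 0.632
```

Across the whole legacy range the relative reduction falls between about 61% and 63%, and the 62% figure matches the midpoint of that range.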

Step 3: Negotiate and Execute Data Governance Contracts

What to do: Draft bilateral data sharing agreements between every pair of consortium members, then a master consortium agreement that supersedes them. The master agreement must cover: data minimization obligations (only fraud-relevant features, not full transaction records), breach notification timelines (72-hour standard under GDPR), model version control and rollback rights, liability caps per incident, and IP ownership of the jointly trained model weights.

Why it matters: This step is where most consortiums stall or fail. The EU AI Act classifies systems as high-risk under Article 6, and Article 10 requires documented data governance practices for those systems. If your consortium agreement does not specify which institution owns the training data provenance records, a regulator examining a false positive decision has no clear audit path. For a deeper read on those compliance costs, see our EU AI Act compliance banking Article 6 breakdown.

Watch for: Agreements that assign joint and several liability across all members for any member's model error. Negotiate proportional liability based on data contribution volume.

Time estimate: 8 to 16 weeks (longer if members span multiple jurisdictions). Who does it: General counsel, data protection officer, COO.
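Proportional liability based on data contribution volume reduces to a simple allocation formula that can be written into the agreement's schedule. The sketch below is one possible reading, assuming contribution is measured as a record count per member; the member names and figures are hypothetical, and per-incident caps are left out.

```python
def proportional_liability(loss: float, contributions: dict) -> dict:
    """Split a loss across members in proportion to data contribution volume.

    contributions maps member name -> records (or feature rows) contributed.
    """
    total = sum(contributions.values())
    if total <= 0:
        raise ValueError("total contribution must be positive")
    return {member: loss * volume / total for member, volume in contributions.items()}

# Hypothetical incident and contribution volumes.
shares = proportional_liability(
    1_000_000.0, {"Bank A": 600, "Bank B": 300, "Bank C": 100}
)
```

Under joint and several liability, any one of these members could be pursued for the full amount; under the proportional rule, the smallest contributor's exposure here is one tenth of the loss.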

KEY TAKEAWAY: Data governance contracts, not the AI architecture, are the primary cause of consortium deployment delays. Budget 16 weeks minimum for multi-jurisdiction agreements, and assign a dedicated project manager to track redline cycles across member institutions.

Step 4: Integrate Blockchain Audit Trails

What to do: Deploy an immutable audit ledger that records every model inference decision: the input feature set (anonymized), the output risk score, the model version, and the timestamp. The ledger stores decision metadata, not transaction records. Permissioned blockchain architectures, with Hyperledger Fabric the dominant choice in financial services, allow each consortium member to write to the ledger while preventing any single member from altering historical entries.

Why it matters: Regulators examining a SAR filing need to verify that the AI flag driving the report was generated by an approved model version, using approved input features, at the time of the transaction. An immutable ledger satisfies that requirement. AML Network documentation confirms that institutions must maintain audit trails including risk scores and rationale logs per jurisdiction, retained for the period required by local law, typically five to seven years. A mutable database does not meet this standard because opposing counsel or a regulator can always question whether records were altered.

Watch for: Audit ledgers that log at batch rather than transaction level. If the ledger records only daily summaries, it cannot satisfy per-decision audit requirements during a regulatory examination.

Time estimate: 10 to 14 weeks for ledger deployment and integration testing. Who does it: CTO, compliance officer, blockchain infrastructure vendor.
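The tamper-evidence property behind the audit ledger can be shown with a small hash chain. This is not Hyperledger Fabric and has no permissioning or consensus; it is a single-process sketch, with hypothetical field and function names, of why altering a historical per-decision entry becomes detectable.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64

def entry_hash(body: dict, prev_hash: str) -> str:
    """Hash an entry body together with the previous entry's hash."""
    payload = json.dumps(body, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append_decision(ledger: list, anonymized_features: dict, risk_score: float,
                    model_version: str, timestamp: str) -> dict:
    """Append one per-decision entry linked to the previous entry's hash."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS_HASH
    body = {
        "anonymized_features": anonymized_features,  # decision metadata only
        "risk_score": risk_score,
        "model_version": model_version,
        "timestamp": timestamp,
    }
    entry = {"body": body, "prev_hash": prev_hash, "hash": entry_hash(body, prev_hash)}
    ledger.append(entry)
    return entry

def verify_chain(ledger: list) -> bool:
    """Return False if any entry was altered or reordered after being written."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(entry["body"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True
```

Each entry corresponds to one inference decision, which is the granularity the per-decision audit requirement calls for; a daily summary entry would verify just as well but would not answer a regulator's question about a single transaction.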

Consortium AI Deployment Timeline (Weeks)

The cumulative timeline lands at approximately 56 weeks from kick-off to validated production model, assuming no regulatory approval delays. Institutions that compress Step 3 (data contracts) below eight weeks consistently encounter renegotiation cycles that extend the total timeline by four to six weeks.
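As a cross-check, the per-step time estimates in this guide can be summed directly. The sketch assumes the five steps run strictly back to back and excludes any time spent on the Step 0 preconditions; the guide does not state how the 56-week figure was derived.

```python
# (min_weeks, max_weeks) per step, copied from the time estimates in this guide.
STEP_ESTIMATES = {
    "Step 1: governance": (6, 10),
    "Step 2: architecture": (4, 6),
    "Step 3: data contracts": (8, 16),
    "Step 4: audit ledger": (10, 14),
    "Step 5: training and KPIs": (12, 16),
}

def sequential_range(estimates: dict) -> tuple:
    """Total weeks if every step runs back to back with no overlap."""
    low = sum(lo for lo, _ in estimates.values())
    high = sum(hi for _, hi in estimates.values())
    return low, high

total_low, total_high = sequential_range(STEP_ESTIMATES)  # (40, 62)
```

The sequential range is 40 to 62 weeks, so a 56-week plan sits toward the upper end and leaves room for most steps to run near their longer estimates.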

Step 5: Train, Validate, and Set Shared KPIs

What to do: Run federated model training across consortium members for a minimum of three monthly rounds before any production inference. After training, validate the consortium model against each member's holdout dataset independently. Agree on shared KPIs before go-live. Core metrics include: false positive rate (target below 40%, measured monthly), true positive rate on confirmed fraud cases (target above 85%), SAR filing accuracy (measured quarterly), and alert-to-investigation conversion rate.

Why it matters: Without pre-agreed KPIs, members measure success on incompatible baselines and exit the consortium when their internal metrics diverge from a neighbor's. Banks executing consistent layered ML architectures report lower false positive rates and reductions of more than 50% in manual review costs, according to Backbase research. Those outcomes require shared measurement, not individual reporting.

Watch for: Members weighting KPIs toward metrics that favor their own transaction mix. A retail-heavy bank optimizing for card fraud detection will diverge from a corporate bank focused on wire transfer anomalies. The consortium model must cover both, and both must appear in the shared KPI dashboard.

Time estimate: 12 to 16 weeks for three training rounds plus validation. Who does it: Data science leads at each member institution, coordinated by the consortium administrator.
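Shared KPIs only work if every member computes them the same way. The sketch below pins down two of the core metrics under an explicit assumption: "false positive rate" here means the share of alerts that were not confirmed as fraud, matching how this guide describes the 90% to 95% legacy figure, rather than the statistical definition based on all legitimate transactions. The function names and monthly counts are hypothetical.

```python
def alert_false_positive_rate(alerts_total: int, alerts_confirmed: int) -> float:
    """Share of alerts that turned out not to be fraud."""
    if alerts_total <= 0:
        raise ValueError("alerts_total must be positive")
    return (alerts_total - alerts_confirmed) / alerts_total

def true_positive_rate(fraud_flagged: int, fraud_total: int) -> float:
    """Share of confirmed fraud cases that the model flagged."""
    if fraud_total <= 0:
        raise ValueError("fraud_total must be positive")
    return fraud_flagged / fraud_total

def meets_targets(fp_rate: float, tp_rate: float) -> dict:
    """Check the two numeric targets named in this step."""
    return {
        "false_positive_rate_below_40pct": fp_rate < 0.40,
        "true_positive_rate_above_85pct": tp_rate > 0.85,
    }

# Hypothetical monthly counts for one member.
fp = alert_false_positive_rate(alerts_total=1000, alerts_confirmed=650)
tp = true_positive_rate(fraud_flagged=650, fraud_total=720)
status = meets_targets(fp, tp)
```

Running the same functions per transaction type (card versus wire, for example) is one way to surface the divergence between retail-heavy and corporate members on a single dashboard.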

How Does Consortium Governance Deadlock Happen, and How Do You Prevent It?

Governance deadlock in AI fraud detection bank consortiums occurs when voting rules require unanimity and a single member's legal team blocks a time-sensitive model update. A consortium member detects a new fraud pattern and submits a proposed model update. A second member's legal team flags a potential GDPR data minimization concern with the new features. The fraud pattern spreads for six weeks while lawyers negotiate.

The fix is to pre-define an emergency update protocol that allows majority-vote model updates for active fraud campaigns, with retroactive full-committee review within 30 days.
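The emergency protocol can be expressed as a rule that both approves the update and fixes the date by which the full committee must review it. This is a sketch under assumptions: a strict majority of votes cast, a review window counted in calendar days from the approval date, and hypothetical member names.

```python
from datetime import date, timedelta

REVIEW_WINDOW_DAYS = 30  # from the protocol described above

def emergency_update_decision(votes: dict, approved_on: date) -> dict:
    """Apply a majority-vote rule and compute the retroactive review deadline.

    votes maps member name -> True (approve) or False (object).
    """
    if not votes:
        raise ValueError("at least one vote is required")
    approvals = sum(1 for v in votes.values() if v)
    approved = approvals * 2 > len(votes)
    review_due = approved_on + timedelta(days=REVIEW_WINDOW_DAYS) if approved else None
    return {"approved": approved, "review_due": review_due}

# One member's legal team objects; the update still ships, with review scheduled.
result = emergency_update_decision(
    {"Bank A": True, "Bank B": False, "Bank C": True}, date(2026, 5, 1)
)
```

In this example the update is approved on 1 May 2026 and the full-committee review falls due on 31 May 2026, so the objecting member's concern is still heard, just not at the cost of a six-week delay.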

What Causes Silent Data Quality Degradation in Federated Consortiums?

Silent data quality degradation occurs when one member upgrades its core banking system mid-deployment. The new system formats transaction timestamps differently. Federated gradients from that member introduce systematic noise into the consortium model, and no alarm fires because overall accuracy degrades slowly rather than suddenly.

The fix is to implement automated data quality monitoring at each member's local gradient generation step, with drift alerts sent to the consortium administrator within 24 hours of detection.
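For the timestamp scenario described above, a local data quality check can be as simple as measuring how many records still parse under the agreed format before gradients are computed. The format string, the 99% threshold, and the function names below are hypothetical choices for illustration, not part of any consortium standard.

```python
from datetime import datetime

AGREED_FORMAT = "%Y-%m-%dT%H:%M:%S"  # hypothetical consortium-wide format
MIN_PARSE_RATE = 0.99                 # hypothetical alert threshold

def parse_rate(timestamps: list, fmt: str = AGREED_FORMAT) -> float:
    """Fraction of timestamps that parse under the agreed format."""
    if not timestamps:
        raise ValueError("no timestamps to check")
    ok = 0
    for raw in timestamps:
        try:
            datetime.strptime(raw, fmt)
            ok += 1
        except ValueError:
            pass  # wrong format; counted as a failure
    return ok / len(timestamps)

def should_alert(timestamps: list) -> bool:
    """True when the batch should be held back and the administrator notified."""
    return parse_rate(timestamps) < MIN_PARSE_RATE

# Hypothetical batches from before and after a core banking upgrade.
before_upgrade = ["2026-03-01T10:15:00", "2026-03-01T10:16:30"]
after_upgrade = ["01/03/2026 10:15", "2026-03-01T10:16:30"]
```

A check like this fires on the first affected batch, whereas overall model accuracy may take several rounds to show the degradation.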

How Does Regulatory Examination Mismatch Happen?

A third common failure is regulatory examination mismatch, which occurs when the explanation behind a decision is held by a different member than the one under examination. A regulator examines Member Bank A's SAR filings and requests the full decision audit trail for 12 flagged transactions. The blockchain ledger holds the metadata, but the human-readable explanation of which features drove each risk score lives in Member Bank B's explainability layer. Bank A cannot produce the required documentation without requesting access from Bank B, which takes three days and triggers a cross-border data transfer log.

The fix is for each member to maintain a local copy of the explainability layer output for every decision it acts upon, separate from the consortium ledger.

For a broader look at single-institution fraud AI deployment pitfalls, the AI fraud detection deployment pitfalls guide covers the institutional prerequisites that also apply in consortium settings.

Success Metrics

Primary metric: AML false positive rate, measured monthly. Target: below 40% within six months of production go-live, from a typical legacy baseline of 90% to 95%.

Secondary metrics: True positive rate on confirmed fraud cases (target above 85% at 90-day mark); SAR-to-confirmation rate (target above 30%); manual review hours per 1,000 alerts (target 50% reduction versus pre-consortium baseline at 180 days).

Leading indicators (weeks 1 to 8): Data quality scores per member, gradient contribution volume per round, ledger write latency.

Lagging indicators (months 3 to 6): False positive rate, investigation conversion rate, regulatory examination outcomes.

Decision Checkpoint

Proceed if: All four prerequisites are satisfied, regulatory pre-approval is in writing, data governance contracts are fully executed across all founding members, and the blockchain audit ledger has completed a 30-day pilot with zero data integrity failures.

Stop and reassess if: Any founding member has not completed an internal model risk management review of their local model; the consortium agreement contains joint and several liability language that has not been renegotiated to proportional liability; or the chosen architecture requires raw transaction records to leave any member institution's environment.

Escalate to board level if: The consortium spans EU and non-EU members and the cross-border data transfer legal basis has not been confirmed in writing by data protection counsel in each jurisdiction.
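The three checkpoint outcomes above can be encoded as a single function so that the go/no-go decision is reproducible. The flag names are hypothetical, and two choices are assumptions not stated in the guide: escalation is evaluated before the stop conditions, and any unmet proceed condition defaults to "stop".

```python
def checkpoint(state: dict) -> str:
    """Return 'escalate', 'stop', or 'proceed' from boolean checkpoint flags."""
    if state["spans_eu_and_non_eu"] and not state["transfer_basis_confirmed_in_writing"]:
        return "escalate"
    stop_conditions = (
        not state["all_local_models_passed_mrm_review"],
        state["joint_and_several_liability_unresolved"],
        state["raw_records_leave_member_environment"],
    )
    if any(stop_conditions):
        return "stop"
    proceed_conditions = (
        state["prerequisites_satisfied"],
        state["regulatory_preapproval_in_writing"],
        state["contracts_fully_executed"],
        state["ledger_pilot_30_days_zero_failures"],
    )
    # Assumption: anything short of all proceed conditions is treated as a stop.
    return "proceed" if all(proceed_conditions) else "stop"
```

Recording the input flags alongside the result gives the steering committee a dated record of why the consortium did or did not move to production.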

The Verdict: When to Proceed and When to Stop

Proceed with consortium deployment once your institution has cleared the regulatory pre-approval gate and executed data governance contracts. The false positive reduction is material and measurable. The IMF's April 2026 guidance gives compliance officers a credible reference document to present to their boards. Do not proceed if data contracts remain unsigned or if your local model has not passed a model risk management review. A consortium amplifies whatever model risk each member brings in.

The next 12 months will test whether regulators enforce the EU AI Act's August 2026 high-risk AI registration deadline on consortium systems or treat them as shared infrastructure with looser obligations. Watch the European AI Office's guidance on multi-party AI systems, expected in Q3 2026. That guidance will determine whether consortium operators face one conformity assessment per system or one per member institution.

Frequently Asked Questions

Q: How long does it take to deploy an inter-bank AI fraud detection consortium?

A full deployment from governance setup to validated production model takes approximately 56 weeks, assuming no regulatory approval delays. The longest single phase is data governance contract negotiation, which runs 8 to 16 weeks for single-jurisdiction consortiums and longer for cross-border groups.

Q: What is the difference between federated and centralized consortium architectures for AML?

In a centralized architecture, anonymized transaction records leave each institution and pool in a shared data lake for model training. In a federated architecture, raw data stays inside each institution and only model gradients travel across the network. For EU-member consortiums, federated learning is the only GDPR-compliant option.

Q: What KPIs should an inter-bank fraud consortium track after go-live?

The primary KPI is monthly AML false positive rate, with a target below 40% within six months, down from a legacy baseline of 90% to 95% per TheStreet. Secondary KPIs include true positive rate above 85% at 90 days, SAR-to-confirmation rate above 30%, and 50% reduction in manual review hours at 180 days.

Q: Why do most fraud detection consortiums fail after initial deployment?

Three patterns account for most post-deployment failures: governance deadlock when voting rules require unanimity, silent data quality degradation when a member upgrades its core banking system without updating gradient formatting, and regulatory examination mismatches when explainability data held by one member is needed by another. Each has a documented fix detailed above.

Q: Does the EU AI Act apply to inter-bank fraud detection consortiums?

Yes. Inter-bank AI fraud detection systems almost certainly qualify as high-risk AI under the EU AI Act, per GDPR Register. The August 2, 2026 deadline requires conformity assessments, finalized technical documentation, and registration in the EU high-risk AI database. The European AI Office is expected to publish specific guidance on multi-party AI systems in Q3 2026.

Sources

- PYMNTS, "IMF Says AI Can Win Fraud Fight if Banks Start Sharing Data." pymnts.com

- AML and FinCrime Tech Forum USA, "Inside Rhino's Push to Make Privacy-Preserving AML Collaboration Work." fintech.global

- MDPI Symmetry, "Cross-Institutional Financial Fraud Detection via FedGAT." mdpi.com

- Emburse, "AI Fraud Detection in Banking 2026 Guide." emburse.com

- Backbase, "Bank Fraud Prevention 2026: What Works and What Fails." backbase.com

- GDPR Register, "EU AI Act Compliance 2026 Timeline." gdprregister.eu

- AML Network, "What is Blockchain AML Compliance." amlnetwork.org

- FluxForce, "AI Fraud Detection: ROI Banks Are Seeing in 2026." fluxforce.ai
